Artificial intelligence (AI) is no longer an emerging technology in Southeast Asia.

Countries across the region are aggressively adopting AI systems for everything from smart city surveillance to credit scoring apps that promise greater financial inclusion.

But there are growing rumblings that this headlong rush towards automation is outpacing ethical checks and balances. Looming over glowing guarantees of precision and objectivity is the spectre of algorithmic bias.

AI bias refers to cases where automated systems produce discriminatory results on account of technical limitations or issues with the underlying data or development process. This can propagate unfair prejudices against vulnerable demographic groups.

For instance, a facial recognition tool trained predominantly on Caucasian faces can have drastically lower accuracy at identifying Southeast Asian individuals.
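One way to make such a gap visible is to measure accuracy separately for each demographic group instead of reporting a single overall figure. The following is a minimal sketch of that idea in Python. The function name `accuracy_by_group`, the group labels and the toy records are all hypothetical and made up for illustration; they are not measurements from any real system.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return the share of correct predictions for each group.

    `records` is an iterable of (group, predicted, actual) tuples.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        if predicted == actual:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


# Made-up toy records, for illustration only (not real measurements).
toy_records = [
    ("group_a", "match", "match"),
    ("group_a", "no_match", "no_match"),
    ("group_a", "match", "match"),
    ("group_a", "match", "match"),
    ("group_b", "match", "no_match"),
    ("group_b", "no_match", "no_match"),
    ("group_b", "no_match", "match"),
    ("group_b", "match", "match"),
]

rates = accuracy_by_group(toy_records)
# The gap between the best- and worst-served group is one simple bias signal.
gap = max(rates.values()) - min(rates.values())
print(rates, gap)
```

A single overall accuracy figure for these toy records would hide the fact that one group is served far worse than the other, which is why an audit of this kind reports the per-group numbers side by side.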

As Southeast Asia attempts to navigate the new terrain of automated decision-making, this article delves into the swelling chorus of dissent questioning whether the region’s AI ascent could leave marginalised communities even further behind.

How bias creates discrimination

In Southeast Asia, the prevalence of AI bias is evident in various forms, such as flawed speech and image recognition, as well as biased credit risk assessments.

These algorithmic biases often result in unjust outcomes, disproportionately affecting minority ethnic groups.

A notable example from Indonesia demonstrates this. An AI-based job recommendation system unintentionally excluded women from certain job opportunities, a result of historical biases ingrained in the data.

The diversity of the region, with its array of languages, skin tones and cultural nuances, often gets neglected or inaccurately represented in AI models that depend on Western-centric training data.

Consequently, these AI systems, which are often perceived as neutral and objective, inadvertently perpetuate real-world inequalities rather than eliminating them.

Ethical implications

The rapid evolution of technology in Southeast Asia presents significant ethical challenges in AI applications, due largely to the breakneck pace at which automation and other advanced technologies are being adopted.

This rapid adoption outpaces the development of ethical guidelines.

Limited local involvement in AI development sidelines critical regional expertise and widens the democracy deficit.

The “democracy deficit” refers to the lack of public participation in AI decision-making. Facial recognition rolled out by governments without consulting the affected communities is one case.

For example, Indigenous groups such as the Aeta in the Philippines are already marginalised and could face particular threats from unchecked automation. Without data or input from rural Indigenous communities, they could be excluded from AI opportunities.

Meanwhile, biased data sets and algorithms risk exacerbating discrimination. The region’s colonial history and the continuing marginalisation of Indigenous communities cast a long shadow.

The uncritical implementation of automated decision-making, without addressing underlying historical inequalities and the potential for AI to reinforce discriminatory patterns, presents a profound ethical concern.

Regulatory frameworks lag behind the swift pace of technological implementation, leaving vulnerable ethnic and rural communities to cope with harmful AI errors without recourse.

Geopolitical dynamics

Southeast Asia finds itself at a crucial juncture, strategically positioned at the centre of AI advancements and geopolitical interests.

Both the United States and China appear to be leveraging AI to expand their influence in the region.

During President Biden’s 2023 trip to Vietnam, the US government revealed initiatives for increased collaboration and investment by American corporations, including Microsoft, Nvidia and Google, in Southeast Asian countries to gain access to data and engineering talent. This data and talent are seen as crucial for training advanced AI systems.

At the same time, China has been rapidly investing in digital infrastructure projects in the region through its Belt and Road Initiative, sparking concerns about technological colonialism.

There are also worries that Southeast Asia may become a battleground for US–China AI competition, escalating security tensions and the risk of an AI arms race.

With major powers vying for economic, military and ideological influence, Southeast Asian nations face complex challenges in managing these multifaceted interests around AI.

Crafting policies that balance benefits and risks, while maintaining autonomy, will be critical.

The path ahead: caution mixed with optimism

Given Southeast Asia’s immense diversity of ethnicities, languages and socio-cultural traditions, the region has both unique vulnerabilities and tremendous opportunities regarding AI ethics.

Building more inclusive technological futures requires sustained collaboration across governments, companies and community groups.

No single prescription can “solve” algorithmic bias, but emphasising representation, accountability and transparency will point the way.

In Southeast Asia, civil society groups and scholars are increasingly vocal about the need for guardrails on AI adoption, better representation in datasets and protections against automated discrimination.

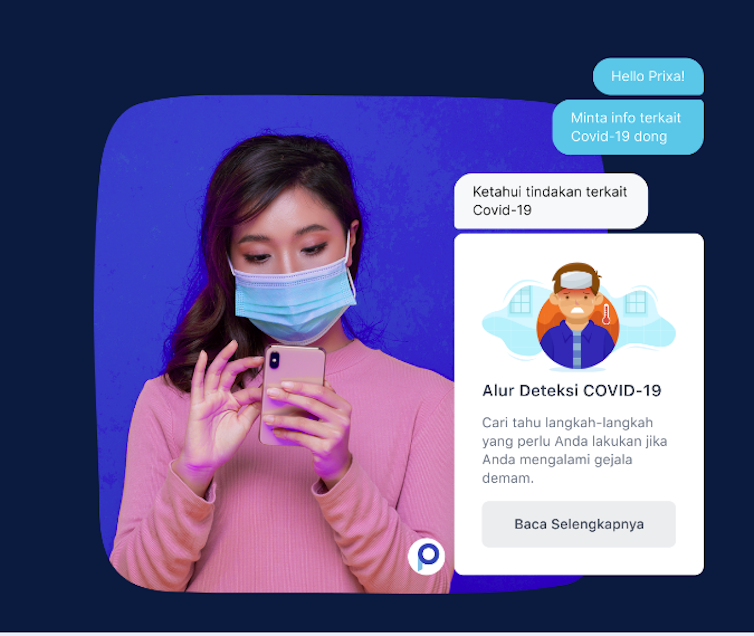

https://kata.ai/

While a growing number of local start-ups are contributing region-specific AI technologies, such as Kata.AI, the first natural language processing algorithms for the Indonesian language in Indonesia, or Bindez in Myanmar, more is needed to ensure local experts contribute to nuanced AI systems tailored for Southeast Asia.

GSMA Mobile for Development Impact Report 2015

To support this vision, more funding and collaboration must be fostered not only between ASEAN members, but also with global experts on AI technology.

Fundamentally, the path ahead necessitates vigilance. Technologies do not stand apart from the societies shaping them.

Therefore, in questioning pervasive assumptions encoded in AI systems, perhaps we move closer towards the emancipatory promise of automation. Ensuring all voices are heard, not just those of the privileged and powerful, remains vital even in our algorithmic age.

This article was originally published at theconversation.com